This post was written by Katharina Brown, an FSI Global Policy Intern on NTI’s Scientific and Technical Affairs team. Katharina is a senior at Stanford University studying Computer Science with a focus on Artificial Intelligence.

Today’s popular discussion of artificial intelligence (AI) issues reflects the need to debate the implications of new AI-driven technologies, like lethal autonomous weapons systems or self-driving cars, before they become widely adopted. Although AI and machine learning (ML) have received less public debate in the nuclear world, new ML research has the potential to disrupt the nuclear field, and the security implications deserve discussion.

- In July 2018, researchers at the University of Tokyo published a tool for predicting the direction of radioactive material dispersion. Using datasets of near-surface wind conditions labeled with the correct directions as determined by a meteorological simulation, they trained a support vector machine (SVM) to classify examples into four discrete directions.

  An SVM, often after implicitly mapping the data into a higher-dimensional space, finds the boundary that separates two categories by the widest possible margin, making it a useful tool for classification tasks like this one. This approach had an average success rate of 85% and was accurate in predicting conditions up to 33 hours in advance. The researchers cited the Japanese government’s struggle to respond to the 2011 Fukushima Daiichi Nuclear Power Plant disaster as motivation for their work and expressed their hope that Japan would adopt a similar model to inform crisis management, so that officials could make better decisions on when and how to evacuate areas affected by a future release of radiation.

- In 2017, researchers at Purdue University published a new pipeline for detecting tiny cracks on underwater surfaces at nuclear power plants. Working from videos of underwater components, the approach aggregates data from multiple frames of the video, uses a neural network to identify potential cracks, and finally applies a probabilistic test to filter out false positives caused by smudges, welds, or other normal features of the equipment. This paper is only one example of the various machine learning approaches to fault detection in nuclear reactors published over the last two years.
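
The final filtering step can be illustrated with a small sketch. The post does not describe the exact probabilistic test the Purdue team used, so the code below rests on stated assumptions: a detector (hypothetical here) has already assigned a crack probability to the same candidate location in each of several frames, the frames are treated as independent evidence, and that evidence is combined naive-Bayes style by summing log-odds. The function names, the 0.5 prior, and the 0.95 threshold are illustrative choices, not values from the paper.

```python
import math
from typing import Sequence


def log_odds(p: float) -> float:
    """Convert a probability to log-odds, clamping away from exactly 0 or 1."""
    p = min(max(p, 1e-9), 1.0 - 1e-9)
    return math.log(p / (1.0 - p))


def fuse_frames(frame_probs: Sequence[float], prior: float = 0.5) -> float:
    """Combine per-frame crack probabilities into one posterior (illustrative).

    Assumes frames are conditionally independent given the true label and that
    every per-frame probability was produced under the same prior. Then
    posterior log-odds = prior log-odds + sum of each frame's evidence, where a
    frame's evidence is its log-odds minus the prior log-odds.
    """
    prior_lo = log_odds(prior)
    total = prior_lo + sum(log_odds(p) - prior_lo for p in frame_probs)
    return 1.0 / (1.0 + math.exp(-total))


def is_crack(frame_probs: Sequence[float], threshold: float = 0.95) -> bool:
    """Keep a candidate only if the fused evidence clears a high bar."""
    return fuse_frames(frame_probs) >= threshold
```

A candidate that scores 0.8, 0.85, and 0.9 in three frames fuses to about 0.995 and is kept, while one that scores 0.9 in a single frame but 0.3 and 0.2 in the others fuses to about 0.49 and is discarded. Requiring agreement across frames is what lets a filter like this reject one-off artifacts, such as a smudge that only looks like a crack in one frame.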

These innovations are currently academic projects, not yet solutions that have been adopted by industry or government entities. That’s why now is the time to consider the security concerns of ML approaches, before we ask technologies like these to inspect a nuclear power plant or protect people from radiation dispersion.

ML security is distinct from traditional computer security because the fundamental characteristic of ML, using what it has already seen to handle new problems, makes it a target for some unique forms of exploitation. Attacking an ML system doesn’t require cracking passwords or stealing data; it’s possible to generate malicious input carefully tailored to trick an ML system, even when the target’s algorithm and training data are unknown. Even models that achieve high accuracy on a representative test data set can produce unexpected results when they encounter data that’s deliberately manipulated or simply unusual. The more we entrust critical issues to ML systems, the more serious the potential safety or security consequences of the system’s mistakes become.
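
To make the idea of tailored malicious input concrete, here is a toy sketch of a gradient-sign attack, in the spirit of the fast gradient sign method, against a hypothetical linear classifier whose weights the attacker knows. Real attacks on deep networks, and black-box attacks where the weights are unknown, are more involved; the weights, input, and `epsilon` below are made up for illustration.

```python
from typing import List


def score(w: List[float], b: float, x: List[float]) -> float:
    """Linear decision score: positive means class 1, negative means class 0."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def sign(v: float) -> int:
    """Return 1, -1, or 0 according to the sign of v."""
    return (v > 0) - (v < 0)


def gradient_sign_attack(
    w: List[float], b: float, x: List[float], epsilon: float
) -> List[float]:
    """Shift every feature by exactly epsilon in the direction that pushes toward the other label.

    For a linear model the gradient of the score with respect to x is simply w.
    Stepping against sign(w) when the score is positive (or along it otherwise)
    changes the score by epsilon * sum(|w_i|), the largest change any
    perturbation of that per-feature size can produce.
    """
    direction = -1.0 if score(w, b, x) > 0 else 1.0
    return [xi + direction * epsilon * sign(wi) for xi, wi in zip(x, w)]
```

With `w = [2.0, -3.0, 1.0]`, `b = -0.5`, and `x = [1.0, 0.2, 0.5]`, the score is 1.4. Shifting each feature by only 0.3 lowers it by 0.3 × 6 = 1.8, to -0.4, and the predicted class flips even though no single feature moved much.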

The offensive-defensive race to control ML systems does not yet have a clear winner. Innovative techniques are being developed both to generate attacks automatically and to improve robustness against these threats. Even more important for critical applications, researchers are investigating ways to verify ML models, that is, to guarantee a certain level of performance even against adversarial inputs. This dynamic research area deserves attention and support, especially from industries that have an interest in adopting ML for safety- or security-sensitive work.
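
Verifying deep networks is an open research problem, but for the simplest case, a linear classifier, a guarantee can be written down directly. This sketch (a hypothetical helper, not any particular verification tool) relies on the fact that a perturbation whose entries are each at most ε in magnitude can move the score w·x + b by at most ε‖w‖₁, so the prediction is certified whenever |w·x + b| > ε‖w‖₁.

```python
from typing import List


def certified_radius(w: List[float], b: float, x: List[float]) -> float:
    """Largest per-feature perturbation size that provably cannot flip the label.

    For a linear score s(x) = w.x + b and any delta with |delta_i| <= eps,
    |s(x + delta) - s(x)| = |w.delta| <= eps * sum(|w_i|). So if
    eps < |s(x)| / sum(|w_i|), the sign of the score cannot change.
    """
    s = sum(wi * xi for wi, xi in zip(w, x)) + b
    l1 = sum(abs(wi) for wi in w)
    if l1 == 0:
        return float("inf")  # constant classifier: changing the input never matters
    return abs(s) / l1


def is_certified(w: List[float], b: float, x: List[float], epsilon: float) -> bool:
    """True if no perturbation of size epsilon or less can change the prediction."""
    return epsilon < certified_radius(w, b, x)
```

For `w = [2.0, -3.0, 1.0]`, `b = -0.5`, and `x = [1.0, 0.2, 0.5]`, the radius is 1.4 / 6 ≈ 0.23: a perturbation of 0.2 per feature is certified safe, while 0.3 is not. Guarantees for real networks follow the same logic, bounding how far the output can move, but require much looser and costlier bounds.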

Rather than wait for the world to be transformed by AI in the distant future, we should pay careful attention to shaping the field’s progress in a more secure direction, especially when it comes to applications as vitally important as those in the nuclear field.

In the University of Tokyo study, there were six SVMs, each one tasked with discriminating between only two of the labels (a “one-versus-one” approach). An SVM works by finding the largest possible margin separating two categories, so handling more than two categories requires multiple SVMs: with four directions there are 4 × 3 / 2 = 6 possible pairs, one SVM for each.
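
A sketch of how one-versus-one voting turns pairwise decisions into a single label. To keep it self-contained, each pairwise "SVM" is a hypothetical linear decision function with hand-set weights rather than a trained model (a real SVM would learn its weights and bias by maximizing the margin on labeled data), and the direction labels and two-component wind vector are assumptions, not the study's actual inputs.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, Tuple

Vector = Tuple[float, float]
PairwiseClassifiers = Dict[Tuple[str, str], Vector]

# Hypothetical unit vectors for four dispersion directions, as (east, north) components.
DIRECTIONS: Dict[str, Vector] = {
    "N": (0.0, 1.0),
    "E": (1.0, 0.0),
    "S": (0.0, -1.0),
    "W": (-1.0, 0.0),
}


def make_pairwise_classifiers(labels: Dict[str, Vector]) -> PairwiseClassifiers:
    """Build one linear decision function per pair of labels (one-versus-one).

    Stand-in for trained SVMs: for the pair (a, b) the weights are d_a - d_b with
    zero bias, so the score is positive when the input points more toward a.
    """
    classifiers: PairwiseClassifiers = {}
    for a, b in combinations(labels, 2):
        (ax, ay), (bx, by) = labels[a], labels[b]
        classifiers[(a, b)] = (ax - bx, ay - by)
    return classifiers


def predict(x: Vector, classifiers: PairwiseClassifiers) -> str:
    """Each pairwise classifier votes for one of its two labels; the most votes wins.

    Ties are broken by Counter.most_common, which keeps first-encountered order.
    """
    votes: Counter = Counter()
    for (a, b), (wx, wy) in classifiers.items():
        score = wx * x[0] + wy * x[1]
        votes[a if score > 0 else b] += 1
    return votes.most_common(1)[0][0]
```

The pairwise classifiers need not agree. For a wind vector of `(0.9, 0.2)`, the N-versus-S classifier still votes N and the S-versus-W classifier votes S, but E collects three of the six votes and wins.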

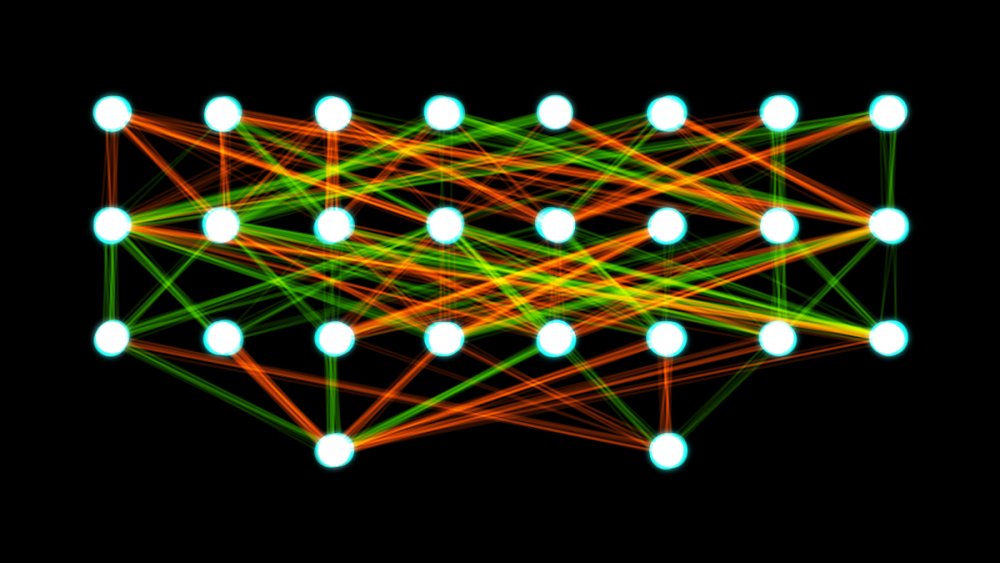

The type of neural network used in the Purdue pipeline, a convolutional neural network (CNN), is commonly used in image processing. It works by repeatedly sliding small learned filters across the input (“convolutions”), layer after layer, to extract features such as edges and textures that can be used to identify similar images.
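
A minimal sketch of the core operation, assuming a single-channel image and one hand-picked 3 × 3 filter. In a real CNN the filter values are learned from data and many filters are stacked across layers; like most deep-learning libraries, this sketch does not flip the kernel, so strictly speaking it computes a cross-correlation. The vertical-edge filter is an illustrative choice, picked because a crack shows up as a sharp change in intensity.

```python
from typing import List

Matrix = List[List[float]]


def convolve2d(image: Matrix, kernel: Matrix) -> Matrix:
    """Slide the kernel over the image (no padding, stride 1), summing elementwise products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map: Matrix) -> Matrix:
    """Keep positive responses and zero out the rest (a common CNN nonlinearity)."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Illustrative vertical-edge filter: responds where intensity rises from left to right.
VERTICAL_EDGE: Matrix = [
    [-1.0, 0.0, 1.0],
    [-1.0, 0.0, 1.0],
    [-1.0, 0.0, 1.0],
]
```

On a 4 × 5 patch whose left three columns are 0 and right two columns are 1, `convolve2d(patch, VERTICAL_EDGE)` returns `[[0, 3, 3], [0, 3, 3]]`: zero over the flat region and a strong response wherever the window straddles the edge. Stacking such layers lets later filters respond to combinations of edges rather than raw pixels.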

Of course, ML systems can also be vulnerable to the same threats as any other system: if the attacker does manage to steal the model’s training data, for example, they have a significant advantage in crafting an attack.