Atomic Pulse

NTI Seminar: A Stable Nuclear Future? Autonomous Systems, Artificial Intelligence and Strategic Stability with UPenn’s Michael C. Horowitz

This post was written by Taylor Sullivan, an intern on NTI’s Global Nuclear Policy Program team. Taylor is a senior at Georgetown University majoring in Government with minors in History and Economics.

As countries around the world develop artificial intelligence capabilities, how will the international nuclear security environment be affected? NTI hosted expert Michael C. Horowitz, a professor of Political Science at the University of Pennsylvania, for a seminar on Nov. 9 that examined the implications of autonomous weapons systems for the prospects of a stable nuclear future.

Following a detailed introduction to artificial intelligence (AI) and its theoretical role in military technology, Horowitz identified key factors that give leaders of nuclear-capable countries an incentive to develop AI. These included the speed, precision, bandwidth, and decision-making of autonomous systems. Global conflict moves faster than ever, Horowitz said, and autonomous programs offer the ability to respond to threats more quickly than human-run systems. Additionally, many experts argue that AI systems provide greater accuracy because they are unaffected by human weaknesses, such as emotion or error.

Though the United States has expressed a commitment to maintaining a certain level of human control over its nuclear arsenal, other countries including Russia, China, and North Korea have signaled their interest in autonomous weapons, including uninhabited nuclear vehicles.

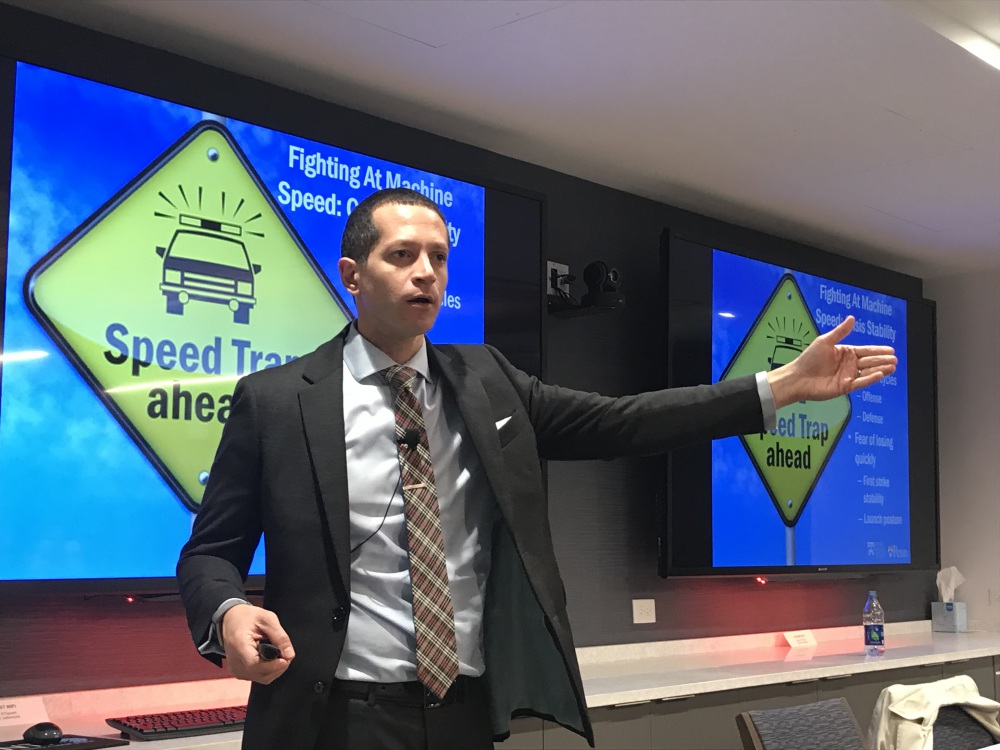

As these countries push for automation, what are the implications for the international nuclear security environment? Horowitz said:

- The less secure a country’s leader believes his or her second-strike capabilities to be, the more likely that country is to employ autonomous systems within its nuclear weapons complex.
- There is “some risk associated with greater automation in early warning systems.” Though autonomous early warning systems can theoretically identify threats faster and more reliably, Horowitz warned that potential downsides of such systems include the lack of human judgment, the brittleness of algorithms, and increased false alarms.
- There is potentially a “large risk associated with the impact of conventional military uses of autonomy on crisis stability.” Although fighting at machine speed can allow a country to win a conflict faster, it can also lead to a quicker loss. This fear, according to Horowitz, could incentivize leaders of nuclear countries to automate their first-strike capability.

Such discussions are necessary as more countries turn to AI as the answer to their security vulnerabilities. Horowitz quoted Russian President Vladimir Putin’s assertion that AI is the future “for all of humankind,” and that whoever leads in this technological race will become the “ruler of the world.”
